Fish Food 679: Organisational knowledge in the age of AI

Engineering context to think differently, using LLMs in research, AI as 'System 3' thinking, and what's going on with ad agencies?

This week’s provocation: A new knowledge architecture for a new age

I’m not a fan of trendy neologisms like ‘prompt engineering’. Marvin Minsky once described how ‘suitcase words’ (high-level and abstract terms) often contain a variety of different, sometimes jumbled meanings. And there are a lot of suitcase words around AI right now. But confused as they might be, these terms can also carry with them a fundamentally interesting technique or concept.

‘Context engineering’ is one such suitcase phrase. We can define it as the discipline of designing, structuring, curating, managing and dynamically feeding the most relevant information into an AI’s context window so that it can perform complex, multi-step tasks accurately and without confusion or misdirection. At the simplest level we might think of this as creating the ‘knowledge ecosystem’ for an AI agent. For example, designing the agent so that it searches for and pulls in only the specified data or documentation relevant to that stage in the process, rather than giving it everything at once (dynamic retrieval or RAG). Or designing what the agent needs to remember from its short-term history versus what it needs to know from a longer-term historical perspective (memory management). We might also design which systems and tools the agent can access and how the results of these tools are fed back into the thought process of the AI (tool orchestration). And we might even decide to summarise or delete irrelevant data or information to avoid overloading the AI’s context window (its finite thinking capacity) and to keep it focused on the task in hand.
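To make the moving parts above a little more concrete, here is a minimal Python sketch of assembling context for one stage of a task. Everything in it is an assumption for illustration: the `Doc` record, the `build_context` function, the stage labels and the word-count budget are hypothetical, the word count is a crude stand-in for real token counting, and the sketch simply leaves items out rather than summarising them.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    title: str
    stage: str  # the step in the process this document is relevant to
    text: str


def rough_size(text: str) -> int:
    # Crude stand-in for a real tokeniser: count whitespace-separated words.
    return len(text.split())


def build_context(docs, stage, recent_turns, long_term_notes, budget):
    """Pick what goes into the context window for one stage of a task."""
    # Priority order: long-term memory, stage-relevant documents,
    # then short-term history with the newest turn first.
    candidates = (
        [f"[memory] {note}" for note in long_term_notes]
        + [f"[doc: {d.title}] {d.text}" for d in docs if d.stage == stage]
        + [f"[recent] {turn}" for turn in reversed(recent_turns)]
    )
    context, used = [], 0
    for item in candidates:
        cost = rough_size(item)
        if used + cost > budget:
            continue  # leave out anything that would overflow the budget
        context.append(item)
        used += cost
    return context


# Placeholder data, invented for this example.
docs = [
    Doc("Pricing policy", "quote", "Discounts above ten percent need approval"),
    Doc("Brand guide", "draft", "Use plain friendly language"),
]
context = build_context(
    docs,
    stage="quote",
    recent_turns=["Customer asked for a quote"],
    long_term_notes=["Client prefers email"],
    budget=50,
)
print("\n".join(context))
```

The design choice worth noticing is the priority order: what gets considered first is what survives when the budget is tight, so deciding that order is itself part of the context engineering.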

All of this is the ‘context engineering’ needed for an agent to move through a continuous, well-informed loop of thinking, acting, observing and repeating, without losing focus. It grounds agents in proprietary, real-time data, gives them the information needed to make good decisions in an efficient way, and enables scaled, standardised architectures. Setting this up right is not only about pointing an agent at a database, but defining your business logic for how the agent should make decisions and take actions based on the information available.
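The loop of thinking, acting, observing and repeating can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular agent framework works: `run_agent`, the two tools and the stock threshold are all hypothetical, and the `decide` function is hand-written business logic standing in for the step where a real agent would consult a model.

```python
def run_agent(goal, tools, decide, max_steps=5):
    """Think, act, observe, repeat, until the policy says stop."""
    observations = []
    for _ in range(max_steps):
        action = decide(goal, observations)  # think: choose the next step
        if action is None:
            break  # nothing left to do
        tool_name, argument = action
        result = tools[tool_name](argument)  # act: call the chosen tool
        observations.append((tool_name, result))  # observe: record the result
    return observations


# Hypothetical tools; a real agent would call actual systems here.
tools = {
    "check_stock": lambda sku: 3,
    "reorder": lambda sku: f"reorder placed for {sku}",
}


def decide(goal, observations):
    # Business logic (assumed for this example): reorder when stock is below 5.
    if not observations:
        return ("check_stock", goal)
    last_tool, last_result = observations[-1]
    if last_tool == "check_stock" and last_result < 5:
        return ("reorder", goal)
    return None


history = run_agent("SKU-42", tools, decide)
print(history)
```

The point of separating `decide` from `tools` is that the business logic for when to act lives in one visible place, rather than being implied by whichever database the agent happens to be pointed at.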

But I think the idea of architecting knowledge for AI goes far deeper than just a technical practice. The quality of every AI-assisted decision, recommendation, and output is bounded by the quality of context it receives. This makes the curation of organisational knowledge (what gets captured, how it’s structured, how relationships between ideas are maintained) a fundamental strategic capability.

From knowledge management to knowledge architecture

In my post about the future of learning and development one of the core distinctions I used was the difference between productive and generative learning. The former is about efficiency, applying existing knowledge and getting to known answers faster (‘find-it-out’ use cases). The latter is about creating new understanding, reasoning through ambiguity, generating novel options (‘figure-it-out’ use cases). AI can supercharge both of these opportunities but businesses are in danger of getting stuck in productive mode. They’re building better search engines for their own stuff when the real opportunity is in generative mode - using AI to reason with the organisation’s accumulated knowledge to produce genuinely new thinking.

Another way of thinking about this is Chris Argyris’s distinction between single-loop and double-loop learning. Single-loop asks ‘are we doing things right?’ (productive). Double-loop asks ‘are we doing the right things?’ (generative). In the integration of AI, many organisations are optimising for the first and barely even thinking about the second. But each mode requires a fundamentally different approach to how you structure and curate knowledge.

Productive context

Traditionally, knowledge management has been about storage, retrieval and sharing. Helping humans find things. Good context engineering here is about making the organisation searchable to AI. The goal is to externalise and structure what the organisation collectively knows so that AI can surface it on demand. This is not just documents, but learned experience and heuristics. It’s like building an organisation’s long-term memory.

In the age of AI, Nonaka and Takeuchi’s SECI model, a classic knowledge management framework, takes on a whole new meaning.

Socialisation: tacit knowledge passes between people through shared experience, observation, and practice, without ever becoming codified. This is like the apprentice watching the master.

Externalisation: tacit knowledge gets articulated into explicit form - concepts, frameworks, metaphors, documents. Like the master documenting the principles behind the craft in a manual.

Combination: explicit knowledge is reorganised, synthesised, and systemised by combining it with other explicit knowledge. Akin to assembling the manual into a training curriculum.

Internalisation: explicit knowledge gets absorbed back into tacit form through practice and doing, becoming embodied skill (the apprentice has the training and then does it until it becomes instinct).

Socialisation and internalisation are likely to remain uniquely human, but AI can really help with externalisation, for example by turning transcripts and post-project reflections into structured knowledge assets and capturing decision rationale. It can also supercharge combination, by taking explicit knowledge that already exists somewhere and reorganising it into more useful configurations faster than any human analyst could. The potential here is about breadth and accessibility, making sure AI can draw from the full range of what the organisation knows, not just what’s been formally documented. Enterprise search tools like Glean and Google Cloud Search/Vertex AI Search are a sign of things to come. But realising their full potential needs deliberate design: capturing and structuring organisational knowledge so that it is searchable not only by keyword but also by user intent.

Generative context

The goal here is less about retrieval and more about recombination. It’s about giving AI enough rich, diverse, and well-structured context that it can help humans identify patterns that they wouldn’t otherwise see, to generate scenarios, challenge assumptions, and produce genuinely novel strategic thinking.

In the 1960s Arthur Koestler wrote about bisociation, or the idea that creative breakthroughs come from connecting frames of reference that don’t usually meet. Routine thinking or optimisation operates within a single frame of reference. It is single-loop learning. You move along established lines of practice and your job is to do what was done before as efficiently as possible, or perhaps with marginal improvements.

Creativity is combinatorial. Creative thinking happens at the intersection of two previously unconnected frames. Koestler uses the example of humour. A punchline in a joke forces you to reinterpret the setup within a completely different frame than you were expecting. Making abductive leaps is a uniquely human thing (LLMs can’t jump - PDF). AI cannot bisociate frames that exist only as tacit knowledge (embodied understanding, intuitive group dynamics, aesthetic judgement from years of doing). But AI can be an excellent engine for bisociation within the realm of explicit or documented knowledge. So with generative context you're deliberately juxtaposing diverse knowledge domains to surface new combinations, new perspectives, and give humans the source material to inspire those creative leaps forwards. An AI working with marketing documentation alone will recombine marketing thinking. But feed it marketing strategy + anthropological research + game theory + competitive intelligence + proprietary customer patterns, and now you're creating conditions for genuine bisociation.
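As a rough sketch of what deliberate juxtaposition could look like in practice, the Python below pairs up snippets of explicit knowledge only when they come from different domains, then turns each pair into a prompt. The function names, the dictionary layout and the snippet texts are all invented placeholders, and the prompt wording is just one possible framing.

```python
from itertools import combinations


def cross_domain_pairs(snippets):
    """Return every pair of snippets that comes from two different domains."""
    return [
        (a, b)
        for a, b in combinations(snippets, 2)
        if a["domain"] != b["domain"]
    ]


def juxtaposition_prompt(a, b):
    # Frame the two ideas side by side and ask for the connection.
    return (
        f"Idea from {a['domain']}: {a['text']}\n"
        f"Idea from {b['domain']}: {b['text']}\n"
        "What does each idea suggest about the other?"
    )


# Placeholder snippets, invented for this example.
snippets = [
    {"domain": "marketing", "text": "Loyalty schemes reward repeat purchase."},
    {"domain": "marketing", "text": "Brand salience drives consideration."},
    {"domain": "game theory", "text": "Repeated games make cooperation rational."},
]
pairs = cross_domain_pairs(snippets)
prompts = [juxtaposition_prompt(a, b) for a, b in pairs]
print(prompts[0])
```

Filtering out same-domain pairs is the whole trick here: with marketing material alone the list of pairs would be empty, which mirrors the argument that a single frame of reference can only recombine itself.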

This is not about better search, but rather it’s about creating the conditions for better thinking. As just one example, Piers Fawkes is building Fodda: ‘a catalog of expert-built context graphs. Each graph adds a distinct perspective, giving AI systems structured, explainable knowledge they cannot learn from public data alone’. It’s like a series of knowledge feeds that gives a business a diverse set of expert perspectives (full disclosure - Piers talked me through this and it’s such an interesting idea). The context engineering challenge with generative context is not about access and retrieval, but about curation, diverse inputs and juxtaposition. We’re not just making knowledge available, we’re deliberately combining knowledge sources in ways that provoke new thinking. We’re structuring knowledge so that AI systems can reason with it.

My belief is that this will be a significant source of competitive advantage. The quality of generative AI output is directly proportional to the richness of the context you feed it. An AI reasoning with your proprietary market data, your customer insights, your strategic history, your competitive intelligence, and your cultural context will produce fundamentally different (and better) thinking than one working with generic knowledge alone.

Knowledge as a living system

Most organisations will naturally gravitate toward the productive side (it’s more tangible, easier to measure, and perhaps faster to show ROI) but the generative side is where the real differentiation lies. An organisation that becomes really good at the productive side (knowledge access and retrieval) will be efficient, well optimised and well-versed in best practice. A business that becomes good at the generative side (recombination and novelty) will be creative, innovative and trailblazing. Both are necessary.

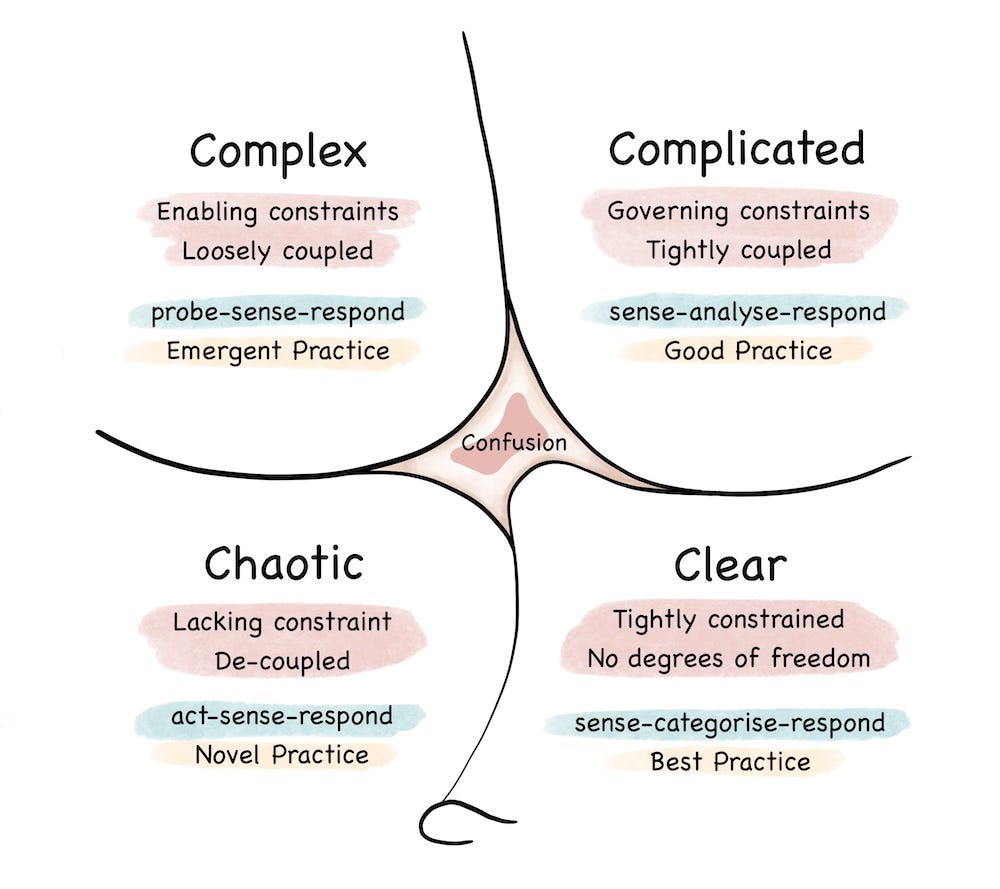

Dave Snowden’s Cynefin framework demonstrates the trap that most organisations are at risk of falling into. They’re treating all AI applications as if they live in the ‘clear’ or ‘complicated’ domains where best practices exist and answers can be found through analysis. This makes sense where existing thinking or known information will suffice. But much strategic work lives in the ‘complex’ domain, where cause and effect relationships are less obvious, new thinking is required, and you need to probe-sense-respond. Here, the knowledge architecture is not about making information searchable on demand but providing diverse, sometimes contradictory, contextually rich knowledge that AI can synthesise and reason across to help you see patterns, generate scenarios, and identify assumptions you didn’t know you were making. The mistake is architecting all your knowledge for retrieval (clear or complicated) when your competitive battles are being fought in complexity.

Thinking more deeply about this raises an awkward question around who owns knowledge architecture in the organisation. It's not purely IT (who may build infrastructure, but not epistemology). It's not purely HR (L&D manages training, but not organisational intelligence). It's not purely Strategy departments either (they make use of knowledge, but they don't curate it). Right now the answer is probably no one, which is a problem. But whoever takes this on will need to develop strategy around both productive and generative knowledge:

Productive knowledge (retrieval): Investing in externalisation and combination. Capturing tacit knowledge and making it explicit. Using AI to turn meeting transcripts, project retrospectives, and Slack conversations into structured, searchable knowledge assets. The goal is making your organisation’s collective intelligence findable.

Generative knowledge (recombination): Deliberately curating diverse knowledge domains and structuring them for cross-pollination. Going beyond function-specific archives to connect with other knowledge domains. The goal is creating the kind of intersections that can generate new and emergent thinking.

Most organisational knowledge decays. Written strategies become outdated, process playbooks drift away from reality, and institutional memory fragments. The old model treated knowledge as something you produce and store. We need to move to a new model that treats knowledge as something that needs to be cultivated and grown. A living system that requires ongoing curation, pruning, updating, and connection-making. In this new world we shouldn’t treat organisational knowledge as an inventory of information. It’s more like a supply chain - dynamic, flowing, and requiring constant management.
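One small, practical reading of ‘ongoing curation and pruning’ is that every knowledge asset carries a review date and something regularly checks which ones are overdue. The Python below is a minimal sketch of that idea only; the `KnowledgeAsset` fields, the review intervals and the example assets are assumptions made up for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class KnowledgeAsset:
    title: str
    last_reviewed: date
    review_every_days: int  # how long this asset can go without a check


def due_for_review(assets, today):
    """Return the titles of assets whose last review is older than allowed."""
    return [
        asset.title
        for asset in assets
        if today - asset.last_reviewed > timedelta(days=asset.review_every_days)
    ]


# Placeholder assets, invented for this example.
assets = [
    KnowledgeAsset("Pricing playbook", date(2025, 1, 10), 90),
    KnowledgeAsset("Brand strategy", date(2025, 11, 1), 365),
]
stale = due_for_review(assets, today=date(2026, 1, 10))
print(stale)
```

Giving each asset its own interval reflects the fact that a process playbook and a long-term strategy drift away from reality at different speeds.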

Ultimately it’s not going to be the businesses with the most knowledge that succeed, it will be the ones whose knowledge is structured for better thinking.

Rewind and catch up:

The value of craft in the age of Agentic AI

Scenario planning with AI (updated)

The Coming Revolution in Learning

Photo by Patrick Tomasso on Unsplash

If you do one thing this week…

Inspired by Alan Klement’s post over on LinkedIn, I wrote out a simple methodology for using LLMs in rigorous research for this week’s IPA AI in Strategy course. The method involves creating custom XML methodology files defining things like epistemological and ontological assumptions, ‘Do Not Use’ terms, and specific operational definitions. I thought it would be useful to share it here as well so you can see/download the guide here (for free).
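For a sense of what a methodology file of this kind might contain, here is a hedged Python sketch that builds one with the standard library. It is not taken from the guide: the element names (`methodology`, `assumptions`, `do_not_use`, `definitions`), the `build_methodology` helper and every example value are hypothetical, chosen only to mirror the three ingredients mentioned above.

```python
import xml.etree.ElementTree as ET


def build_methodology(assumptions, banned_terms, definitions):
    """Serialise research ground rules into a small XML document."""
    root = ET.Element("methodology")

    assumptions_el = ET.SubElement(root, "assumptions")
    for kind, text in assumptions.items():
        ET.SubElement(assumptions_el, "assumption", kind=kind).text = text

    banned_el = ET.SubElement(root, "do_not_use")
    for term in banned_terms:
        ET.SubElement(banned_el, "term").text = term

    definitions_el = ET.SubElement(root, "definitions")
    for name, meaning in definitions.items():
        ET.SubElement(definitions_el, "definition", term=name).text = meaning

    return ET.tostring(root, encoding="unicode")


# Placeholder content, invented for this example.
xml_text = build_methodology(
    assumptions={
        "epistemological": "Claims must trace back to interview evidence.",
        "ontological": "Customer goals exist independently of products.",
    },
    banned_terms=["pain point", "delight"],
    definitions={"need": "A constraint the customer has stated in their own words."},
)
print(xml_text)
```

The resulting text can be pasted in ahead of a research prompt so that the model works within stated definitions rather than its own defaults.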

Links of the week

‘The findings highlight that not all AI-reliance is the same: the way we interact with AI while trying to be efficient affects how much we learn’. Apropos of last week’s post on protecting craft in the age of AI a study from Anthropic (in the context of coding) shows that using AI can significantly decrease mastery, but also that this is not inevitable. Used in the right way AI can also help build comprehension. HT Nicholas Thompson

Meanwhile, here’s an interesting study from the University of Pennsylvania positing how AI use can become ‘System 3’, or ‘artificial cognition that operates outside the brain’. Adopting AI outputs without scrutiny or judgment, or what the researchers call ‘cognitive surrender’, overrides both System 1 (intuition) and System 2 (human reasoning). HT Ethan Mollick

Some talk this week about the latest IPA Census which shows staff numbers at creative agencies fell more than 14% in 2025. That’s no small shift. Interestingly almost half of that figure (48%) was due to resignations, and 20% due to redundancy. Those left appear to be working harder than ever which, as Sean Betts notes, seems to indicate that job cuts may be happening ahead of the expected efficiency gains from AI.

For those that have left recently and are looking at freelance work, Richard Huntington shared (renowned planner) Jon Leach’s ‘The Hustlers toolkit’, written in the heat of COVID. I wrote up my own advice for new freelancers here.

Meanwhile S4 Capital agency Monks is pivoting to a subscription model. There’s no doubt that the billable hour model is no longer fit for purpose but it will be interesting to see if they can make this stick.

One of my favourite things to do is the quarterly Digital Shift marketing trends webinars for Econsultancy. I’ve been doing them for 14 years and still get a lot from the process. My next webinars are happening on Thursday 19th March and I’ll be talking about the latest research on how AI is changing the world of work, what’s going on with agentic AI, and context engineering (see above). You can sign up (for free) here.

And finally…

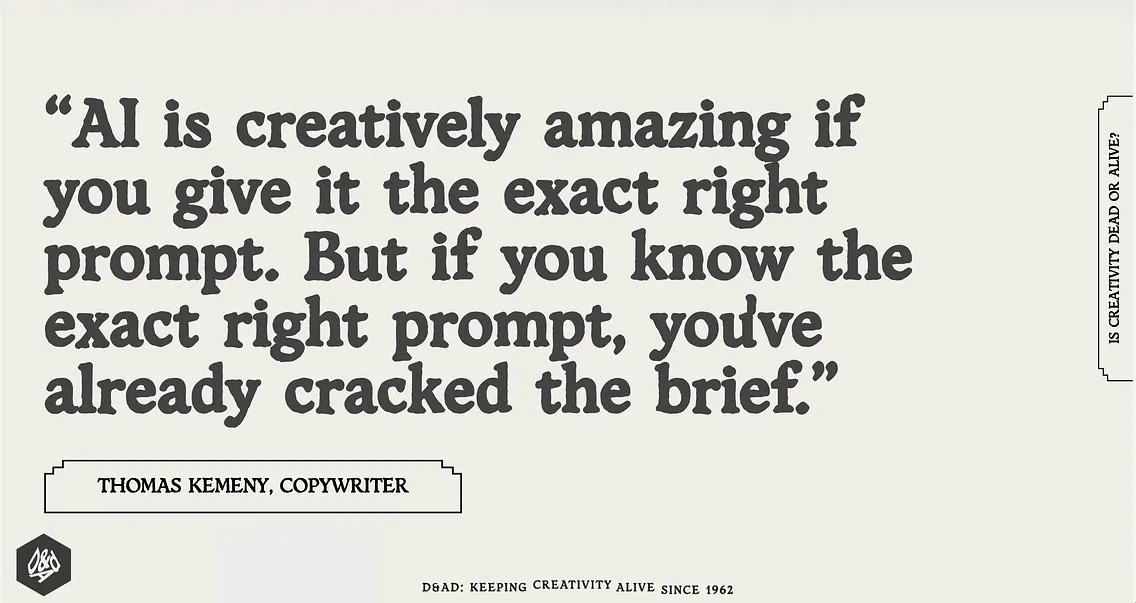

I liked this quote from copywriter Thomas Kemeny, shared by Vikki Ross.

Weeknotes

This week I ran the latest IPA AI in Strategy and Planning course at the mothership in Belgrave Square, and I also did a bunch of proposals and outlines for upcoming speaking and workshop gigs. And it rained a lot (again). Next week I’m doing a talk at VCCP on AI in strategy, and also co-facilitating a session with a global lead at Infosys for a few hundred of their leaders on the topic of ‘Leadership in the age of AI’. Interesting just how much of my work is AI focused in some way now.

Thanks for subscribing to and reading Only Dead Fish. It means a lot. This newsletter is 100% free to read so if you liked this episode please do like, share and pass it on.

If you’d like more from me my blog is over here and my personal site is here, and do get in touch if you’d like me to give a talk to your team or talk about working together.

My favourite quote is from the renowned Creative Director Paul Arden: ‘Do not covet your ideas. Give away all you know, and more will come back to you’. This captures what I try to do every day.

Only dead fish swim with the stream.

Thoughtful piece. I agree that the real shift is from knowledge management to knowledge architecture, and that retrieval alone is not enough. The distinction between productive context and generative context is especially important.

One thing I would add from my Columbia research is that enterprise AI also needs behavioral organizational context, not just better-curated documents. A lot of critical knowledge lives in trust, influence, informal authority, and who people actually go to when process breaks. That is a big part of what we are building at www.behaviorGraph.com: a people-side context layer to help AI understand how work really moves inside organizations.

Re: System 3, it has been argued that humans’ fundamental trick is to think not just with their own brain, but with the world. Also, by their definition, every time that we surrender to another person’s opinion without thinking about it would count as System 3. So maybe it doesn’t even exist (because it’s not thinking, and it’s not new to Kahneman’s model.)